Artificial Intelligence and Public Policy

Will A.I. make our government smarter and more responsive – or is that the last step towards the end of privacy? As chief scientist of the U.S. Government Accountability Office, Tim Persons conceives its vision for advanced data analytics. Learn about the promise and challenges around government A.I. and what those portend for private sector companies.

Dr. David A. Bray began work in public service at age 15, later serving in the private sector before returning as IT Chief for the CDC’s Bioterrorism Preparedness and Response Program during 9/11; volunteering to deploy to Afghanistan to “think differently” on military and humanitarian issues; and serving as a Senior Executive advocating for increased information interoperability, cybersecurity, and civil liberty protections. He completed a PhD in from Emory University’s business school and two post-docs at MIT and Harvard. He serves as a Visiting Executive In-Residence at Harvard University, a member of the Council on Foreign Relations, and a Visiting Associate at the University of Oxford. He has received both the Arthur S. Flemming Award and Roger W. Jones Award for Executive Leadership. In 2016, Business Insider named him one of the top “24 Americans Who Are Changing the World”.

Dr. Timothy M. Persons is a member of the Senior Executive Service of the U.S. federal government and was appointed the Chief Scientist of the United States Government Accountability Office (GAO) in 2008. In addition to establishing the vision for advanced data analytic activities at GAO, he also serves to direct GAO’s Center for Science, Technology, and Engineering (CSTE), a group of highly specialized scientists, engineers, and operations research staff. In these roles he directs science and technology (S&T) studies and is an expert advisor and chief consultant to the GAO, Congress, and other federal agencies and government programs on cutting-edge S&T, key highly-specialized complex systems, engineering policies and best practices, and original research studies in the fields of engineering, computer, and the physical and biological sciences to ensure strategic and effective use of S&T in the federal sector.

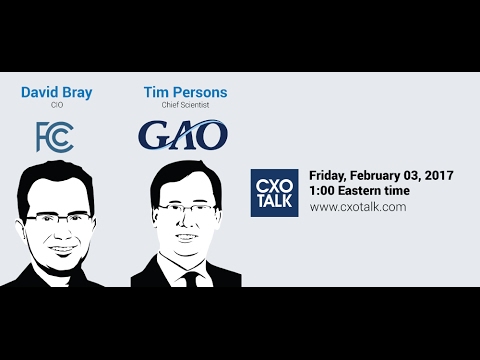

Michael Krigsman: Welcome to Episode #216 of CxOTalk. I'm Michael Krigsman, I'm an industry analyst and the host of CxOTalk, where we bring truly amazing people together to talk about issues like the one we're talking about today, which is the role of AI and the impact on public policy; or maybe I should say, the impact of public policy on AI. Our guest today, we have two guests actually, are Tim Persons, who is the Chief Scientist of the Government Accountability Office of the United States Government, and David Bray, who has been on CxOTalk many times, the Chief Information Officer of the Federal Communications Commission.

And David, let’s start with you. Maybe, just introduce yourself briefly.

David Bray: Sure! Thanks for having me again, Michael. So, as you mentioned, I’m the CIO at the FCC, which means I try to tackle the thorny IT issues we have, internally as well as with our stakeholders, and work across the 18 different bureaus and offices, and right now, the three commissioners that we have that are from both parties.

Michael Krigsman: And, Tim Persons, you’re the Chief Scientist of the GAO. Tell us what the GAO is and what it does, and what you do there?

Tim Persons: That's right, Michael. Thank you. Thanks for having me on, it's great to be at this venue and welcome everyone. I'm Tim Persons, I'm the Chief Scientist of the GAO, and I'm here to essentially support Congress in any of the various STEM-like issues that face the Congress. GAO is one of the few congressional agencies. We actually changed our name in 2004 from "General Accounting Office" to the "Government Accountability Office," and that was a subtle change, but an important one, to be able to reflect the broad remit we have and the focus on accountability, which includes financial accounting that has been our bread and butter. But we now do a lot of performance auditing and analyses on things like Return on Investment, program evaluation, and things like that, for both Senate and House, being that we work for 100% of the House and the Senate committees, and anywhere between 75 and 85% of the subcommittees. So, a broad remit indeed, and I do soup-to-nuts science in that domain, including data science and other issues.

The importance of GAO is just that we're the oversight, insight, and foresight analytic arm of the US Congress. And so, in that regard, we do that ongoing, day-to-day oversight. If any of you are familiar with or like to watch C-Span and various venues, there might be hearings of a panel on this-or-that, and you normally see [...] witnesses from the federal agencies. And, our job is to help support that oversight, but also, more importantly, how to do things, how to achieve better government. That is the insight piece that we work with, as well as the foresight, which is things to come and the implications there. And so, in that regard, I even lead a small group of scientists and engineers...

Michael Krigsman: Fantastic.

Tim Persons: … who do a lot of that sort of thing to support these broad studies that Congress needs to hear about.

Michael Krigsman: So, you know, I think many people may not have heard of the GAO, the Government Accountability Office, and when I used to study, there was a period I was studying and writing very extensively about IT failures, and the quality of the research and the oversight that was put out by the GAO was just simply excellent. So, it's worth looking at the GAO website, because it's an important part of the government in its oversight capability and mandate.

Tim, why is the GAO interested in AI, and the implications for public policy?

Tim Persons: Right. Great question, Michael. And as you mentioned, all of our studies are on gao.gov. So, AI is an emerging, and emergent technology. It has very disruptive implications, and most of you all know that's a business term, and the idea of "disruptive" changes the way we think and do things. And, the U.S. government, for all of its challenges in certain areas, also is a leading purveyor of innovation and sponsor of these sort of things. You could think of the great advances that NASA brought about, for example, or things out of the services, the armed services and so on, and many other things the U.S. does to help sponsor and promote innovation; and AI has been one of them ever since the concept came up in a workshop in Dartmouth in 1956.

So, the idea has been around, but the U.S. government has been a primary investor in it, even though we now see a lot of private industry and money is going into things now to solve problems. It's because of the profound implications brought about by AI, and the need to help the Congress work in a more proactive manner rather than a reactive manner. I typically like to say that most technologies oftentimes have a scary initial feel to them, oftentimes driven by the science fiction or the fun narrative of things. And AI is no different than that. Most of the public that you think about think about AI in a negative context like Skynet in the Terminator series or things like that.

But, there is a lot of "The Art of the Possible" and a lot of promise and potential in this as well. And so, I see it as my job to discuss the opportunities and challenges as well as the policy implications, and AI is a perfect time and a perfect place to do that.

Michael Krigsman: And David, you’re also keenly interested in the policy aspects of AI, so maybe tell us about that interest.

David Bray: Sure! So, at the FCC, when I arrived in August of 2013, we had 207 different IT systems all on-premise, consuming more than 85% of our budget. And if you looked at where the world was going, with the Internet of Things, with machine learning, and yes, with AI, that just was not tenable. And so, in less than two years, we moved everything to public cloud and a commercial service provider, which as a result, has reduced our spending [on data] from 85% of our budgeting systems to less than 50% on a fixed budget. But more importantly, we reduced the time it takes to roll out new services, and new prototypes of offerings to the public that the FCC does, from being 6-7 months, to if you come to us with new requirements now, we can have something in less than 48 hours.

Now, I say that cloud computing is the appetizer for the main course, which is beginning to make sense of all the data that the internet of things would be collecting. The only way you can do that is with a combination of machine learning, and what some call AI and what we're getting out there as well. We've got to have a way of dealing with the tsunami of data that's going to be coming in and be the trusted broker between the public, as well as public-private partnerships so that as a nation and as a world, we can move forward. What experiments can we begin to do that show its benefit to making public service more responsive, more adaptive, and more agile in our rapidly changing world?

Michael Krigsman: Tim, what do you think about this notion of experiments with AI to show what is possible and the benefit that it can bring?

Tim Persons: Yeah, great question! I think it's a without which, nothing. I think if you don't have a sort of experimental ... and I'm an engineer and scientist by training. So if you don't have this experimental, "Let's build safe spaces," as I'll call them; mechanisms to [...] the technology and do these things, as is happening in various areas and elements of AI, then I think you just can't proceed forward. I don't see where you could possibly innovate without the ability to safely fail, learn quickly, iterate, recycle, and move forward.

Michael Krigsman: You know, it’s funny you talk about that, and one thinks about these things, “failing fast,” I don’t like that term, but “fail safely,” “experiment,” “iterate rapidly,” one thinks about that as being in the private sector. Does the government have the ability to be agile in this way?

David Bray: So, I would say that takes leadership. Whether it's a good Chief Scientist or a good Chief Information Officer, I think our job is to make the case to the secretaries or the heads of our agencies as to, "Yes, we need to keep heading with the trains running on time for these things." But, if we only keep the trains running on time and we don't innovate, you'll get to where I got into a situation at the FCC where they had everything on-premise, their IT on average was more than ten years old, and they had fallen behind. And so, the private sector knows this because if they don't keep abreast of what's going on in the private sector, and stay agile and nimble, they fall behind and eventually go bankrupt.

I think the same thing is true in the public sector, which is if we don’t, 1) We’ve got to keep the trains running on time, but then 2) Doing experiments to deliver services differently and better, then we will fall behind. And so, the art of a good C-suite officer to their secretary and their head-of-agency, is to make the case as to, “Here are the things that we’re going to deliver, here are the things that we’re going to try and pivot and learn from, and I’ll be the human flak jacket if you can move that forward.”

And I think that's true for any organization. That's part of the job that Tim does, that's part of my job at the FCC… Other CIOs are out there; you don't often hear from them. But, they are trying to do delivery with results differently, and better to their leadership. And, it's especially key right now because we have a hiring freeze for most of our employees, and the only way we can deliver results differently and better, is if we figure out ways to make people more productive, and that gets to machine learning and AI.

Michael Krigsman: So Tim, any thoughts on this?

Tim Persons: Yeah! You know, I was going to say … I think David said it very well. I think it does take that key leadership. I mean, people don't get elected and appointed in DC by saying, "I'm going to fail on this sort of stuff." You know, no one likes to say, I mean naturally, we don't like to do that, but that is the way innovation comes about regarding, "Let's try this. Okay, that doesn't work out. Let's try that." You always try and make best efforts on that. It does not intend to, you know, make a colossal mess of things, but I think there's a reason that for all of our innovative, high-risk agencies that have shown success over decades of various [...] technologies; I grew up, for example, in the era of the space shuttle, and that used to be very cool and innovative. But, that took a lot of testing by the NASA enterprise around the country in all the various centers. It wasn't like you threw a bunch of things on the launchpad, and then hit the "launch" button with people inside of that. We've obviously had painful national tragedies with that as well, even with best efforts, but that is where the incredible amount of innovation and advances that we can [...] … Just picking on the space program, I'm not even going into the weapons programs or the other civilian-side things, and things like, for example, what David's doing.

Michael Krigsman: We have a question from Twitter, and Arsalan Khan is kind of getting to the heart of the matter, and he wants to know, "What can we use AI for? For example, can you use it to assess government contractor proposals?" So, where are we, regarding a practical use of AI?

David Bray: Great question! One that I’ve been trying to beat the drum on. There actually is already, right now, not in government, it’s actually a public competition to see if anyone can write a machine learning algorithm that will evaluate real estate law as well as a real estate lawyer. And so, that’s about 75% accurate at the moment. As we know, California already is using machine learning to set bail decisions, and that’s interesting because we can identify biases in historical bail decisions, but can also weed out things that should not matter to your bail hearing like your height, your weight, your gender, your race.

There already is a success, for example, in using machine learning to grade papers at the third-grade level, so find the same sentence mistakes and grammar mistakes… And so, yes. I think, can we have faster acquisition; because now, it’s sort of complementing the human that is reading through these very long contracts and making sure they are actually legally approved, and they can be used.

I'd also love to actually see AI actually be used to try and identify ... Where can you identify the most effective employees in the workplace as well as those that are maybe being underutilized and can be used better? I'll defer now to Tim, because part of what makes GAO so wonderful, is that they do both accountability, as well as experiments.

Tim Persons: Right. Thanks, David. And we just did, just to piggyback on that, we just issued; and, I'm just showing a little bit for the camera; but, this is a report, it's fully downloadable on our website, gao.gov; you can just use Google or your favorite search engine, GAO 16-659SP. Anyway, it's our strategic study we did on data and analytics. And I was just talking about data analytics and innovation, what's coming out of this. And, David and I think of these terms in terms of categorizing the advances of data and analytics, and as it moves towards AI; and really the overall datafication of the US federal government. And, starting now, there actually is a law. It's called the Digital Accountability and Transparency Act, or the DATA Act - to those of you who aren't in the know, DC likes to come up with clever acronyms that embody the issue of stuff; and so, this is one of them. And, the DATA Act is really just saying, "Look. Federal agencies and departments, you are required to publish your spend data out in a standardized manner that you can now have data analytics coming up."

But, these are the initial steps that are necessary for the algorithms to be able to not only collate the data but then start to do the intelligent work on it that David was referring to. I mean, right now we're at spend, but exactly what he was saying about HR data, things like, "How do we more critically identify our problems, and have really a more empowered management approach to various federal agencies?"

So, just the day-to-day administration of the government, I think big changes are coming. I'm excited about those sort of things, but obviously, there's a lot of control issues. There are indeed technical issues, and there certainly are policy issues going on.

Michael Krigsman: And, what are the policy issues that come along with all of this?

Tim Persons: So, yeah. Go ahead, Dave. Do you want to take that first?

David Bray: Well, I'm just like, "Where do you even begin?" I think it is … It's actually … I think the "P" in policy is more for "people and workforce." You have to remember, if you go back to 1788, James Madison wrote in the Federalist Papers #51. He said he wanted ambition to counter ambition. And the reason why is, "what is the government itself, but the greatest of all reflections on human nature? If men were angels, no government would be necessary." So the system of checks and balances prevents any one person from having too much influence too quickly across the large public service enterprise.

The challenge with that is AI does cut across the enterprise. It is transformative. And so, we have this system of checks and balances that I think are good, it's what actually keeps our nation moving forward as a republic. And at the same time, you have this exponential change being brought on by data; through the Internet of Things, through AI, etc. And so the question is: How do you take an organization that was intentionally designed to have checks and balances, and have it move forward with speed, in a way that does bring people on? I think it's also the question of, most of the workforce of public service, and I don't believe this is the case of Tim or myself, or even 20% of the people I know in public service.

The premise was: You come in, you move things forward incrementally, you keep the boat afloat regardless of who’s president, and that’s your proposition. Now, what we’re asking them to do is something that’s game-changing, that’s much more like the private sector, except we don’t have an IPO and we don’t have the same financial incentives of if you do a really good job, you can do your initial public offering.

And so, how do we motivate employees that are in a workforce that was hired for one reason, that is to keep the nation moving forward and encourage them just to keep the nation moving forward, but now think completely out of the box and be transformative.

Tim Persons: Yeah. And I would just add that on the policy side of things, part of the era of big data, and data analytics is challenged by how powerful it actually is. There are studies like at MIT, at Cambridge, at UC Riverside, and so on, all showing that just with sparse information, probably out there on places like Facebook, four or five "likes," you can profile a person without knowing anything about them with very high fidelity on various things. So actually, it's almost too powerful in one sense, and so, it does invoke this issue of how do you mitigate against the PII risk, you know? The personally identifiable information where you can resolve individual citizens. We are a constitutional republic. That means we care about individual civil liberties and privacy rights, and so on, and so that's one of the big issues. It's going to have to be dealt with moving forward.

On the cultural side, I think David put his finger on some key things, which is just we have to think totally differently here in the public sector. I would assert it applies to the private sector as well. But, just the idea of thinking algorithmically about things that we normally have taken for granted. And AI makes it so we have to think as a computer does, even though we want to train it to do something that, you know, and David and my decades of life, we have a lot of inherent knowledge that we didn't have to sort of program in. We picked it up over time. But now, there are opportunities to think about this: What are these things that tend toward helping with greater efficiency and success, and yet, still [doesn't] violate constitutional principles?

Michael Krigsman: So, it seems to me you're raising two issues here. One is the issue of the role of public policy regarding supporting AI, or conversely, it can inhibit the use of AI, the expansion of AI; and number two, is the cultural dimension: How do we learn to think algorithmically? So, how do we change our thinking patterns to take advantage of these new technologies?

David Bray: I think that hits the nail on the head, Michael, that I try to use the words "public service" as opposed to "government" nowadays because the time that it takes to send information between Topeka Kansas and Washington DC is no longer four days on horseback, it's now milliseconds. And so, the way we used to do things, we had to account for communication maybe being slow or delayed, and coordination being difficult. That's no longer the case, and so maybe, there are things that we can involve the public, and the public can do directly, without requiring government professionals. Maybe there are things we can do as public-private partnerships, where parts of the private sector are thinking beyond just our own individual bottom line, but are also thinking about local or national impacts.

And so, the last thing we want to do when we move to these technologies, whether it be the cloud, the Internet of Things, or machine learning in AI, is to take the old way of doing things and just replicate it there. We're actually talking about wholesale experiments on how do we deliver results differently and better, given these new technologies and what they create as being possible.

Michael Krigsman: And Tim, your thoughts on that?

Tim Persons: Yeah, I think that it absolutely is the policy will often evolve after the technology does if it just comes upon. And I think people are rightly concerned about the, well, let's just not knee-jerk and regulate on something, and sort of kill the innovation in the cradle, so to speak. And so, I think there's optimism, though. There's good news: We have a view right now in Washington where there's actually … It's more open on this particular issue. It's being led by some of the more near-term innovations of things; I'm thinking in particular about autonomous vehicles; our Department of Transportation, when you talk about our National Highway Traffic Safety Administration, or NHTSA, as we call it in DC, is their safety regulatory body. And yet, they're being proactive with the Waymos, and the Ubers, and even the various car manufacturers on how can we get this right and how can we test this, as well as; how do we do this so that we're not just issuing a rule that comes out and effectively kills US competitiveness on that? And so, you know, I'm not Pollyannaish about this, but I do think there's a posture of recognition that we need to allow for some managed risk in this innovative process, without killing any ideas, and yet trying to be as safe as possible.

Michael Krigsman: Who is responsible for striking this balance? How does it get done? And again, we're talking about AI, and we want to create an environment that fosters AI. And yet, at the same time, people have concerns and want to have certain types of controls in place; and so, that balance, and correct me if I'm wrong, is essentially the province of government policy.

Tim Persons: Right. And in this case I mean to speak to, it's one of these things our government has set up to diffuse power and to have various elements take care of their relevant mission spaces, okay? So let me say it a different way. It's going to devolve to the departments and agencies, so it's going to be context-dependent. Department of Defense is going to care about AI regarding warfare, and what's allowable concerning engaging in warfare, and there's no appetite to just turn over your machine to go and do things so that it's not just doing national security things willy-nilly.

On the other hand, you may look over on the healthcare side of things, Health and Human Services is going to want to regulate. And they care about the health information privacy laws, or the HIPAA act, which is how your and my personal information, our medical information is kept private. And yet, we still may want to be able to utilize these tools to aggregate data, come up with quicker, better, faster, cheaper diagnostics and treatment options for whatever maladies that may come our way. And so, you're going to see evolution in the various departments based upon their particular mission.

Michael Krigsman: David, you look like you’re nodding in furious agreement.

David Bray: I’m in great agreement, and it’s probably best that Tim answers that one, since he’s at the GAO, so…

Michael Krigsman: [Laughter]

Tim Persons: Well, I mean, I think even FCC, right? You want to manage various issues and things like that. I think AI, for FCC, it’s a customized-type thing. There’s not a generalizable AI, where we’re going to say, “Here’s this thing,” and it’s going to apply across the board. These things are going to be highly sophisticated and contextualized in whatever we’re asking them to do.

David Bray: Agreed. I think that’s key to our republic. Our republic, as Tim said so eloquently, does aim to diffuse power to the specific missions of the departments and agencies so they know context best. And so, what I would say, with looking at experiments from machine learning and AI is context, context, context.

Tim Persons: Right.

Michael Krigsman: Again, I keep coming back to this point: What is the government's role and that of the public, because we're talking about public policy?

And let me also mention that you are watching Episode #216 of CxOTalk, and we're talking about AI, artificial intelligence, and public policy. We're speaking with David Bray, who is the Chief Information Officer for the Federal Communications Commission, and Tim Persons, who is the Chief Scientist for the Government Accountability Office of the government; which, by the way, does amazingly excellent work, analysis, and research, if you're not familiar with it.

And right now, there is a tweet chat happening with the hashtag #cxotalk. So, please join us on Twitter, and you can ask a question as well.

So, getting back to this issue of the role of government and policy, where are we today? What's the status of policy and AI, and where should the policy domain be going, on AI?

Tim Persons: So, let me just talk briefly about the government role, because this is in some sense, speaking historically, there's the "What has been," and "What is now," and "What could be moving forward." There's always been a general agreement ever since the post-WWII; Vannevar Bush you know, science, in the interests of society memo that he put out, which is really profound regarding establishing the National Science Foundation, and several of our basic research enterprise elements as we know it today. Van Bush, when he was writing about that, was really just saying, "You're investing early-stage science," some might call it "a thousand flowers bloom," you sort of just sprinkle seeds of ideas, relatively low money; although aggregated it could be large money. But, you try things out with our universities and our basic labs and things. Don't we have a great innovation to do that?

Absolutely no controversy, really. That’s bipartisan-supported - the idea of doing that. And it takes a lot of the risk out of just expecting the private sector alone to just sort of explore those sort of things when there’s a high degree of failure in those sort of issues.

Moving forward, though, the key thing oftentimes gets into the, well, creating what I would call an “infrastructure for innovation” so that if entities want to try and develop, how do they de-risk things as they look to scale in manufacturing and other particular areas? And so, the government, where it’s debated about the extent to which the government projects better, or relies only on the private sector. But there are things like in the manufacturing-innovation side, like the National Network for Manufacturing Innovation, as an example of trying to bridge that gap in manufacturing innovation.

And then, when you look to sort of where it’s operational, then that’s where the government comes to regulatory rule-making. So, you’re going to have that there. We want, if it’s competing in a marketplace, you want it to be an even marketplace, or a level playing field. If it’s operating safely, like I mentioned NHTSA earlier, you want to have safe operations so that autonomous cars aren’t, you know, running over living things or doing bad stuff, and crashing and all of that. And so, those are key things the government has. But other than that, you want to be able to create the innovative environment for the economy to move forward, create jobs, and allow for growth.

Michael Krigsman: We have a very interesting question from Wayne Anderson, and he directs this to Tim Persons, who is the Chief Scientist of the Government Accountability Office. It’s a hard question. He asks, “In a world where AI innovation may not succeed, how do you define ‘investment efficiency’?”

Tim Persons: Yeah. Great question, Wayne. The answer is … Oftentimes what's happened historically is when you invest in this, and AI has had - I mentioned this in a [...] workshop, but we're talking about decades of, and likely billions of dollars put into basic research across the various elements, whether it's medical, basic research at NIH, or whether it's DARPA at Defense, or NSF, and so on. It is a good question about, "How often, or how long do we put money into that, and when do we declare defeat, and maybe do something else?"

The short answer is that there is no macro, overarching center of authority that determines that. The closest thing in the executive branch is the Office of Science and Technology Policy, whose previous director, Dr. John Holdren, was appointed by former President Obama; and he's there to often coordinate and facilitate, but oftentimes not dictate and tell, for example, the Department of Energy what they may, or may not do in their research portfolio; or the NSF, or different things. He's very influential, or he was. However, that's not the same thing, again, as this top-down. It's usually more diffuse and left to the different agencies to do.

So, stopping and starting is, again, another one of those contextualized things. There is no central authority on all of these issues. I think the good news for AI is that I've mentioned the decades and billions. I think we have and will continue to see, innovation and fruit come out of that, and I think that's cause for cautious optimism in terms of the various things moving forward.

So, I think the key question is when should the government stop funding something, assuming private industry has already picked it up? And, that’s indeed a greatly debated question that happens in the relevant committees on the Hill.

David Bray: And I'd like to add to that. There's a historical analog. If folks are not familiar, they should look. There was a Project Corona, which was a satellite effort in the late 1950s. And so, this was before we ever had a rocket go to the moon. Basically, ARPA at the time, as well as the Department of Defense and the intelligence community, was trying to launch a satellite that would be able to take photos of Earth. And, that effort had thirteen rocket explosions before they ever even got something up there. And you can imagine nowadays, however, would we be willing to tolerate thirteen rocket explosions before we finally got it? Because, obviously, it paid off; and now, could we imagine living without Google Maps? And in fact, the early predecessor to Google Maps, the imagery it was using was actually from declassified Corona images. And so, this is one of those things where …

You know, how does Elon Musk decide where he's going to focus? He's probably going with a combination of analytics, but ultimately his intuition and his gut. I think the same thing is true with public service, except it is many different people's intuitions and guts, as opposed to one person, and thus a distributed nature. But like Tim said, AI has been through probably about three waves, and we'll probably see another wave after this, and each time, there are going to be things that it will have, that maybe are equivalent to thirteen rocket explosions before they finally pay off.

Michael Krigsman: So we've identified at least two dimensions of policy, it seems to me, during this conversation. Number one is the economic investment policy, given the fact that it may not succeed, but it does hold a great deal of promise. We're talking about AI, but this could be true of any advanced technology such as flights to the moon, as David was just alluding to. And then the second is the role of government policy in terms of regulating AI, or creating a legal and regulatory environment that either supports the development of AI and its proliferation or inhibits it. Is that a correct statement of the two dimensions of policy that we've spoken about?

David Bray: So, this is where I will switch my hats and put on my Eisenhower Fellow to Australia and Taiwan [hat], and I’d say “yes” on that second part talking about what one might be able to do with rule-making. Both Taiwan and Australia are recognizing that with new technologies like the Internet of Things and AI, traditional notions of rulemaking may not be able to keep up with the speed.

And so, personally, I don’t have any answers, and I’d be interested in Tim’s thoughts. We may need to do experiments, in fact, on how do you even keep up with the speed of these technology changes, because the old way that was done may not be sufficient.

Tim Persons: Sure, I agree with that. I think there’s going to need to be just innovation in the rulemaking process. A lot of times, it’s deemed to be quite slow in things now, but it’s just because of the federal laws that have been layered over decades of policymaking that make it so, right? There are ways, I think, to garner public inputs, perhaps in this day and age, far more efficiently and effectively than traditional ways that we’ve done. But, the relevant agencies have to get there.

I also like to say, there are certainly, again, clear and legitimate concerns about regulation stifling innovation. But there’s often the case that it’s not thought of. Sometimes, well-thought-out or contemplated regulation can help spur innovation, in terms of, “Look. We know you ought not to do this, so here; let’s design in this particular way to make this system work in this way.” And I think some of the more creative activities I’ve seen are coming from that positive angle as well, not just the “cut all regulation out!” Because, at the end of the day, I don’t think anybody will want zero regulation and it’s completely and utterly a Wild West. At least, I don’t want to ride in an autonomous vehicle, for example, in that context. But, I think that there’s a way to find out what’s that baseline way of doing things, and then supporting efficient solutions to do that, and we’re going to learn. You cannot eviscerate risk all the way up-front in any enterprise. Period.

Michael Krigsman: We have another interesting question from Chris Petersen, who’s asking, “What are the mechanisms or pathways to gaining collaboration across agencies?” And given the fact that you’ve just been describing the context that each agency has its own needs, it seems to me that that will have a tendency to lead towards siloing and duplicative efforts. And so, what are the pathways for collaboration on innovation?

David Bray: So, I would say that, in some respects, you hit the nail on the head, that the Founders originally wanted siloing, and the source of it prevented any one person from having too much influence. But I think that is the challenge we face. These issues with Internet of Things, machine learning and AI, need to cut across, and in fact, do cut across domains. And so, interestingly enough, I'm going to put [this] forward and I'm interested in Tim's thoughts. I think it's easier for agencies to partner with the private sector than it is for them to partner with themselves, partly because there's what we call the "color of money," the funding money. You get into some very tricky rules and legislation that if I used my money in partnership with another agency's money … This is actually when GAO sometimes gets called in and is actually trying to account for the funds, and so, I would actually put forward the more interesting model we need to think about.

This is: do we need to look at innovative public-private partnerships that maybe have different agencies contributing to it, but the center of gravity is where the private sector and the different agencies are being brought together and convened, as opposed to trying to do something that’s just inter-agency in nature. So, I’ll be interested in Tim’s thoughts.

Tim Persons: Yeah. I know, I totally agree, David. I think that the last president spoke heavily about public-private partnerships. That means a lot to a lot of people; so that itself needs to be thought out in terms of what that means, but there is the art of the possible. Those things have gone on, and I agree. I think sometimes it's easier to connect, I guess, externally with other entities, even private entities, and build those collaborative networks more so than among the federal sector now. Not all hope is lost. There are times when there are formal coordinating bodies set up, either by statute or policy from the White House. There also are informal things, and they also seem very effective, meaning, among the federal entities.

I will speak personally. I participate in the Chief Data Officer-like community. Just in terms of doing things, we just had, in the last administration, a federal-wide Chief Data Officer, and he was excellent and really did a lot to evangelize the idea of data and analytics and what it means, and very powerful indeed. Unfortunately, just the way that we stovepipe things at our agency, the way the budget’s run, the behaviors incentivized, we often are limited in terms of our ability to do that collaborative piece. And there is always an element of, “I needed to do my day job, but then I also needed to coordinate and collaborate. How do I recognize when to do that and build the partnerships to get things done, especially in today’s 21st century, complex, adaptive systems challenges-like issues?”

Michael Krigsman: Very quickly, because we have about just over five minutes left, and there’s another topic that I want to talk about as well. But, very quickly, would either one of you like to offer your prescriptive advice to policymakers regarding how they, and we should be thinking about the role of public policy and AI? Would either one of you like to take that one?

David Bray: So…

Tim Persons: [...]

Michael Krigsman: Okay.

Tim Persons: So, I'll do this. I'm going to say; I'm not going to offer a prescriptive [solution]. I don't think we're prepared to do that. I mean, we personally … I just mentioned our data analytics study. We're kicking off an AI study just because of the importance of this. GAO will officially come out with some concluding observations on this sort of thing in time. So, I'm looking forward to that.

What I will say, though, is that I think the government does have a key role in partnership, I think as David was elegantly talking about - just the idea of the partnerships that we can build, how we solve things in a collaborative, networked, manner; how we focus on problem-solving, and not just what we can, and can't do sort of things. And, I think that we can create the environment where this overall system together can be arranged to maximize our success in innovation, and AI; and minimize the undesirable outcomes.

David Bray: And I will add to that, and say again; I can't do prescriptive. That's actually not what my role is. But I can say, if you look at the successes we've had with the Defense Advanced Research Projects Agency, it might be worth asking, do we need a civilian equivalent that is bringing together these different agencies, but also working with the private sector? Because if we wait for the trickle-down effect of innovations on the defense side with AI and machine learning to be brought into the civilian sector, we're going to be too slow. And so, we may need to have a civilian equivalent of DARPA. And in fact, interestingly enough, there are some agencies that bring in more revenue than they spend, and they can actually be a source of funding it with no additional tax increases or something like that to run the civilian enterprise for advanced research projects in AI.

Michael Krigsman: Okay. So clearly, one of the messages here is, is there a need for a civilian equivalent of DARPA and the role of public-private partnerships in getting things done? But, before we go, we have five minutes left. I would like to shift gears, and talk about the role of the Chief Information Officer in this age of very fluid and changing technology, and very fluid and changing expectations of the CIO. And I know that historically, the CIO, and CIOs in general, and of course there are many exceptions to this, such as David Bray, but CIOs, in general, have gotten this reputation for being the keepers of the word, "no." The default is, you want something done? "No, we can't do that." Can we do this? "No, we can't do that, either. We can't do anything!" And maybe, that's unfair.

So, thoughts, anybody, on the changing role of the CIO today?

David Bray: So I’ll give my real quick [opinion], and then I’ll defer to Tim. I’ll say …

Tim Persons: [Laughter]

David Bray: In the past, CIOs … There are two types of CIOs, I think, nowadays. There are CIOs that still see their jobs as being Chief Infrastructure Officers and just Chief Infrastructure Officers. And, those are the ones that are more likely to say, "no," if it doesn't fit into their infrastructure. But, I think if CIOs are really doing what they need to do to help the organization stay abreast of the tsunami of the Internet of Everything, the large increase in data, machine learning, and AI, they really need to be thinking about a holistic strategy that is defaulting to "yes," and then using a choice architecture strategy to say, "How do we get there in a way that is innovative, manages the risk, and moves the organization forward?"

And so, you can already see this where you see the explosion of Chief Digital Officers, Chief Data Officers; that’s happening because CIOs are not providing enough strategy as an area. And so, we really need the CIOs to stand up and recognize that first and foremost, they should be partnering with their CEO or their head of the organization, for how do they move the organization forward and keep it relevant for the next five years, ten years ahead?

Michael Krigsman: Tim, please. Go ahead.

Tim Persons: Yeah, no. I totally agree with that. I think we have to view this in terms of … Look, these folks have been essentially the Chief Infrastructural Officers for IT in there, and they have to have sort of a fortress mentality of protecting the data and things, given the rise of the hack and all this sort of stuff that’s going on, and going to continue to go on. I do think that from the CDO perspective, the data officer, it’s now turning from looking at where the CIO may see data as a burden as something I’ve got to protect; the more I have, the more I’ve got to protect; the more it costs me, even if I’m in cloud, I’ve got to buy more commodity storage for it or ship it around, or do whatever. It’s changing where the CDO is being brought in to say, “Look, let’s look at that as an asset. As we datify, how do we find optimization? How do we attack decisions that heretofore were ‘relegated to the gut,’ so to speak, and let’s be data and evidence-based in terms of what we’re doing.”

You know, it is a challenging job. I don’t just want to be disrespectful at all, [but] there just has to be some balance brought into that so they’re not falling into the “CI - No” trap, as I think you’ll [hear] about around departments and agencies.

David Bray: If I can get in real quick, for thirty seconds: I think it also depends on where the CIO reports. If the CIO is reporting to the Chief Financial Officer, then you're going to get the "no," because they're thinking about it as cost. If they report to the Chief Operating Officer, you're going to get "no," because they're thinking about risks to the enterprise. If you get them reporting to the CEO or the CEO equivalent of their organization, then they're going to be more risk-taking and more innovative. This is because the CEO, at the end of the day, doesn't want the company to ossify, and does not want it to fall behind; and so, it's actually, to whom do you have the CIO report?

Michael Krigsman: But, I guess my question here to either one of you is, many CIOs, maybe most CIOs, recognize that they need to be providing a - at least they have it in their mind - the awareness, in theory, that they need to be providing a strategic benefit to the organization. And yet, there's a very big disconnect between that awareness and the execution of that in practice. And so, what advice, or how can we overcome that gap to help CIOs not just think about a partnership with the business, but actually do it in a meaningful way?

Tim Persons: I think David brought up a good point about reporting to the agency head or the CEO equivalent, and so on. I think you have to come from a problem-solving approach. It's the how might we do something, rather than, you know, may we work a permission-based thing. That always matters. Policy and rules are there; there are laws there for a reason. But, we have to say, "Look, here's the problem." And oftentimes, just defining the problem well is a very important, but often neglected, Step One, and then coming up with collaborative solutions which may, of course, involve reaching out to the private sector, and allowing the CIOs to feel empowered to do that instead of, "What if the risk is becoming too much box-checking," as it were, on, "Okay, I did this; I did this." But that may unintentionally limit you, but [you] make it feel like you're wherever you need to be.

David Bray: And I would just add to that again. It really … I think you'll probably find those that know it, but don't deliver it as much, don't have as strong a connection to their CEO. It's when you're close to the CEO and the CEO's imparting of the things they want to try. And as Tim said, can we be creative problem solvers in the face of the rapidly-changing world? That's when you'll see those CIOs actually be willing to be a "CI-Yes," as opposed to …

Michael Krigsman: Okay. And, on that, this very fast and interesting 45 minutes has drawn to a close.

You have been watching Episode #216 of CxOTalk. We’ve been speaking about AI and public policy, and then a little interlude on the role of the CIO; an interlude at the end, so maybe, it’s not quite an interlude - but sort of an “ending-lude.” And, we’ve been speaking with David Bray, who is the CIO for the Federal Communications Commission, and Tim Persons, who is the Chief Scientist for the Government Accountability Office.

Thanks, everybody, for watching, and thank you to our guests. We'll see you again next week. Next week, we have a show on Monday and a show on Friday. So, we have two great shows. Bye-bye!